To benefit fully from the high-performance core services, the ingress layer, or ‘front door’, must be engineered to sustain the throughput that national payment infrastructure demands.

In Mojaloop v17, we modernised the gateway layer to unlock the performance of the hub. By replacing earlier components with high-performance alternatives, we ensured that the edge of the switch can sustain national-scale volumes comfortably while providing a secure and efficient path to the core services.

The Engineering Problem

To maintain peak efficiency at national transaction volumes, the ingress and gateway paths must overcome the inherent constraints of high-concurrency processing. We focused on four technical hurdles:

- Security Processing Intensity: Robust security protocols (TLS/mTLS) are non-negotiable for financial integrity, but they add CPU and latency overhead. At scale, this processing demand becomes a primary throughput constraint unless the ingress path is optimised for it.

- Indexing Friction in Data Structures: Every transaction must be uniquely identifiable; this is a prerequisite for any payment switch. Non-sequential identifiers, however, carry no chronological order, so new records land at random positions in database indexes. This fragments data placement and increases indexing overhead, making it difficult to maintain steady write performance as the national ledger grows.

- Protocol Header Synchronisation: International messaging standards such as ISO 20022 enforce strict length limits on identifier fields, commonly 35 characters (the Max35Text data type). Choosing a unique, high-performance ID format that fits within these bounds is essential for global interoperability without costly data transformations.

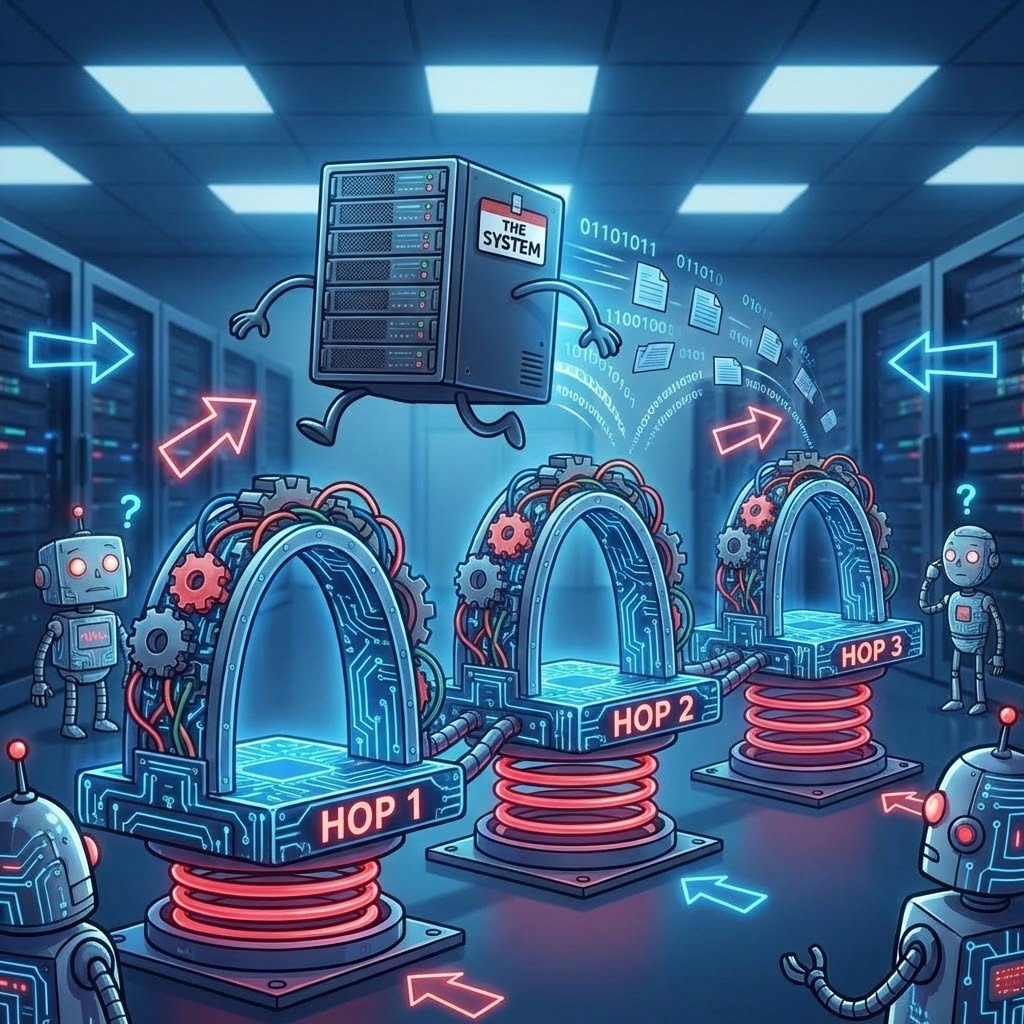

- Cumulative Request Path Latency: In complex distributed systems, every intermediary layer and security transform adds a little latency. At sustained peak load these increments compound, degrading connection persistence and lowering the system’s maximum achievable throughput.
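A rough cost model helps show why security processing and connection persistence matter together. The sketch below is a back-of-the-envelope illustration, not Mojaloop code: it assumes a fixed CPU cost per TLS handshake and a fixed CPU cost per request, with one handshake shared by every request carried on the same connection. The function names and the millisecond figures are hypothetical, chosen only to show the shape of the curve.

```python
def cpu_ms_per_request(handshake_cpu_ms: float, request_cpu_ms: float,
                       requests_per_connection: int) -> float:
    """Average CPU time per request when one TLS handshake is amortised
    across all requests sent over the same connection (simplified model)."""
    if requests_per_connection < 1:
        raise ValueError("each connection carries at least one request")
    return request_cpu_ms + handshake_cpu_ms / requests_per_connection


def max_rps_per_core(handshake_cpu_ms: float, request_cpu_ms: float,
                     requests_per_connection: int) -> float:
    """Upper bound on requests per second for one fully busy CPU core."""
    return 1000.0 / cpu_ms_per_request(
        handshake_cpu_ms, request_cpu_ms, requests_per_connection)


# Hypothetical costs for illustration only: 2.0 ms per handshake, 0.5 ms per request.
for reuse in (1, 10, 100):
    print(reuse, round(max_rps_per_core(2.0, 0.5, reuse)))
# 1 400
# 10 1429
# 100 1923
```

Under these assumed numbers, opening a new connection for every request caps a core at about 400 requests per second, while reusing each connection for 100 requests lifts the ceiling almost fivefold. Real handshake costs depend on key types, cipher suites, mutual authentication and session resumption, so they should be measured on your own hardware.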

What we changed in Mojaloop v17 (high-level)

We made three significant architectural adjustments to the ingress and identifier processing to improve sustained throughput:

- Upgrade ingress components to support higher request rates. We have identified and integrated components specifically designed to handle the demands of high-velocity traffic.

- Adopt high-performance identifiers (ULID over UUIDv4). We moved from random UUIDv4 to ULID (Universally Unique Lexicographically Sortable Identifier) for internal transaction IDs. This seemingly small change had two significant effects:

  - Database Throughput: Because ULIDs are time-ordered, new records are indexed sequentially, appended near the end of an index rather than scattered across it. This sequential data placement significantly improves write speed and storage efficiency during high-concurrency periods.

  - ISO 20022 Compliance: ULIDs (26 characters) fit within the 35-character limit common to ISO 20022 identifier fields, whereas standard UUIDs in their canonical 36-character form do not. Performance therefore does not come at the cost of interoperability.

- Streamline the request path. We reviewed the journey each message takes to the core services, keeping only intermediary layers that add clear value. By consolidating non-functional concerns into fewer layers, we deliver a more direct path to core processing without compromising system integrity.
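To illustrate both identifier effects, the sketch below builds ULID-style identifiers from the published ULID layout (a 48-bit millisecond timestamp followed by 80 random bits, encoded as 26 Crockford Base32 characters) and compares them with random UUIDv4 values. It is a teaching sketch, not the generator Mojaloop uses: `MonotonicULID`, `append_ratio` and `MAX35` are hypothetical names, and a sorted Python list stands in, very loosely, for a database B-tree index. An insert at the end of the list corresponds to sequential placement; an insert in the middle corresponds to the scattered writes that fragment an index.

```python
import os
import time
import uuid
from bisect import bisect_left

# Crockford Base32 alphabet used by ULID (omits I, L, O and U).
# Its characters are in ascending ASCII order, so string order matches numeric order.
CROCKFORD = "0123456789ABCDEFGHJKMNPQRSTVWXYZ"
MAX35 = 35  # hypothetical constant for the ISO 20022 Max35Text length limit


def encode_ulid(value: int) -> str:
    """Encode a 128-bit integer as 26 Crockford Base32 characters (5 bits each)."""
    chars = []
    for _ in range(26):
        chars.append(CROCKFORD[value & 0x1F])
        value >>= 5
    return "".join(reversed(chars))


class MonotonicULID:
    """Hypothetical ULID-style generator: 48-bit ms timestamp + 80 random bits.

    Within one millisecond (or if the clock steps back) the random part is
    incremented instead of redrawn, so every new ID is strictly greater.
    """

    def __init__(self) -> None:
        self._last_ms = -1
        self._last_rand = 0

    def new(self) -> str:
        ms = time.time_ns() // 1_000_000
        if ms <= self._last_ms:
            ms = self._last_ms
            self._last_rand += 1
            if self._last_rand >= 1 << 80:
                raise OverflowError("random component exhausted for this millisecond")
        else:
            self._last_ms = ms
            self._last_rand = int.from_bytes(os.urandom(10), "big")
        return encode_ulid((ms << 80) | self._last_rand)


def append_ratio(keys) -> float:
    """Fraction of inserts that land at the end of a sorted index.

    End-of-index inserts mimic sequential placement; mid-index inserts
    mimic the scattered writes that fragment a B-tree.
    """
    index = []
    appends = 0
    for key in keys:
        pos = bisect_left(index, key)
        if pos == len(index):
            appends += 1
        index.insert(pos, key)
    return appends / len(keys)


gen = MonotonicULID()
ulids = [gen.new() for _ in range(10_000)]
uuids = [str(uuid.uuid4()) for _ in range(10_000)]

print(len(ulids[0]), len(uuids[0]))                    # 26 36
print(len(ulids[0]) <= MAX35, len(uuids[0]) <= MAX35)  # True False
print(append_ratio(ulids))                             # 1.0
print(append_ratio(uuids))  # a small fraction: random keys rarely arrive in order
```

In practice you would rely on a maintained ULID library rather than hand-rolling one; the point here is only that time-ordered keys arrive in index order and fit a 35-character field, while random UUIDs in their canonical form do neither.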

The guiding principle is simple: every hop has a cost, and at sustained load those costs add up quickly. Include only the layers that are necessary, and make sure each one is optimised for efficiency.
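One way to quantify this principle is Little's law, which relates the number of requests in flight (L), throughput (λ) and time in the system (W) as L = λW. If concurrency is capped, for example by a fixed number of connections or worker slots, maximum throughput is L divided by end-to-end latency, so every millisecond a hop adds lowers the ceiling. The hop names and latencies below are hypothetical illustrations, not Mojaloop measurements, and the model ignores the queueing effects that make real latency grow faster under load.

```python
# Hypothetical request path: (hop name, mean latency added in milliseconds).
# Illustrative values only, not measurements of any real deployment.
PATH = [
    ("load balancer", 1.0),
    ("TLS termination", 2.0),
    ("API gateway", 1.5),
    ("extra proxy", 1.0),
    ("core service", 10.5),
]


def end_to_end_ms(path):
    """Total latency W, assuming hops are traversed strictly one after another."""
    return sum(ms for _, ms in path)


def max_throughput_rps(concurrency, path):
    """Little's law (L = lambda * W) rearranged as lambda = L / W, in requests/s."""
    return concurrency * 1000.0 / end_to_end_ms(path)


full = max_throughput_rps(200, PATH)
trimmed = max_throughput_rps(200, [hop for hop in PATH if hop[0] != "extra proxy"])
print(end_to_end_ms(PATH), full)  # 16.0 12500.0
print(round(trimmed, 1))          # 13333.3
```

With 200 requests in flight, removing a single 1 ms hop raises the theoretical ceiling from 12,500 to roughly 13,333 requests per second under these assumed figures, an increase of about 6.7%.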

Practical guidance for adopters

The Mojaloop Control Centre and Infrastructure as Code (IaC) frameworks include these best practices by default. If you are deploying on alternative infrastructure, ensure your environment follows these practices:

- Ingress Monitoring: Explicitly measure edge performance. Monitor gateway CPU utilisation, connection rates, and handshake overhead, and focus on tail latency: the end-to-end time within which 95% and 99% of requests complete (p95 and p99).

- Connection Management: Prioritise connection stability under high load. Avoid performance “taxes” caused by improper timeout tuning or a lack of connection reuse.

- Security Baseline: Conduct all testing with security features enabled. Performance data gathered with security disabled is not applicable to production environments.

- Strategic Simplification: Exercise caution when reducing the number of network hops. Ensure that performance gains do not come at the expense of system observability or functional correctness.
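For teams building their own ingress dashboards, the sketch below computes 95th and 99th percentile latency with the nearest-rank method. This is one of several percentile definitions; monitoring tools that aggregate histograms typically estimate or interpolate percentiles instead, so small differences are expected. `percentile` and `latency_summary` are hypothetical helper names, and the sample data is invented to show how a slow tail can stay hidden in the mean, the median and even p95, yet appear at p99.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: the smallest sample such that at least
    p% of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("need at least one sample")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def latency_summary(samples_ms):
    """Mean plus p50/p95/p99 for a list of request latencies in milliseconds."""
    if not samples_ms:
        raise ValueError("need at least one sample")
    summary = {"mean": round(sum(samples_ms) / len(samples_ms), 1)}
    for p in (50, 95, 99):
        summary[f"p{p}"] = percentile(samples_ms, p)
    return summary


# Invented data: 97 fast requests (10 ms) and a slow tail of 3 requests (250 ms).
samples = [10] * 97 + [250] * 3
print(latency_summary(samples))  # {'mean': 17.2, 'p50': 10, 'p95': 10, 'p99': 250}
```

Tracking both p95 and p99 matters here: in this invented sample only p99 exposes the slow requests, which is exactly the kind of tail that handshake storms or poorly reused connections tend to produce.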

How this fits in the series

This episode is about the ingress/gateway path. Related themes appear in other posts:

- Audit-path simplification (removing audit sidecars by publishing directly to the Kafka audit topic) is covered in Part 2, published on 2 March 2026.

- Kafka partitioning and message-flow design is covered in Part 4, coming up next week.

What’s next

Part 4 focuses on Kafka partitioning and handler concurrency, because messaging is “fast” only when ordering, parallelism, and the workload align.