The Mojaloop Core Team has recently completed the migration from Rancher to EKS for the Mojaloop Core Testing and Dev environments, so that we can spend our time fixing Mojaloop issues instead of maintaining the underlying K8s infrastructure!

Miguel de Barros has had the privilege of working on the Mojaloop OSS (Open Source Software) project as part of the Core Engineering team, in conjunction with the wider community to develop and maintain Mojaloop’s Core and Testing components.

In this blog, I will take you through how this migration reduced our maintenance costs and effort, how we achieved a better alignment to the Kubernetes release cadence, and how we adopted a GitOps approach that uses CI Workflows to provision the environment and deploy supporting infrastructure services.

But let’s first start by looking at an overview of these technologies.

Technology Overview

What is Rancher?

“Rancher is a complete software stack for teams adopting containers. It addresses the operational and security challenges of managing multiple Kubernetes clusters across any infrastructure (i.e. on-prem, on-cloud, or Hybrid) while providing DevOps teams with integrated tools for running containerized workloads.” ~ Source

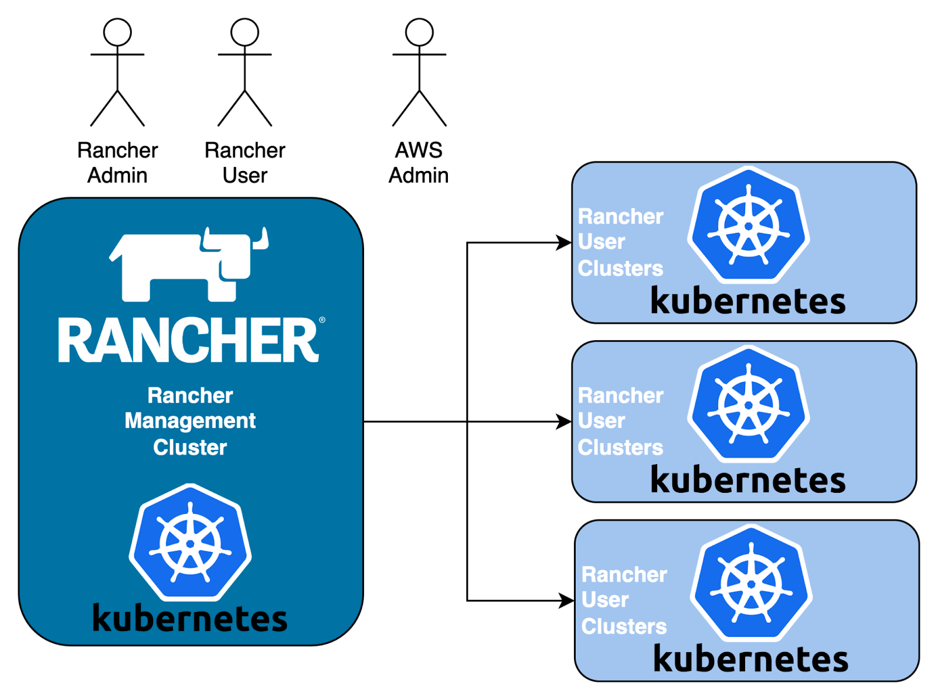

In the following architecture diagram, Rancher consists of:

- A Management Cluster which provides the Management API and Support Services to provision and manage User Clusters from on-prem to on-cloud infrastructure; and

- One or many User Clusters that run application workloads.


The infrastructure flexibility that Rancher provides is really the key reason why it was adopted by the Mojaloop OSS Core team several years back.

What is EKS?

“Amazon Elastic Kubernetes Service (Amazon EKS) is a managed Kubernetes service that makes it easy for you to run Kubernetes on AWS and on-premises. Kubernetes is an open-source system for automating deployment, scaling, and management of containerized applications. Amazon EKS is certified Kubernetes-conformant, so existing applications that run on upstream Kubernetes are compatible with Amazon EKS.” ~ Source

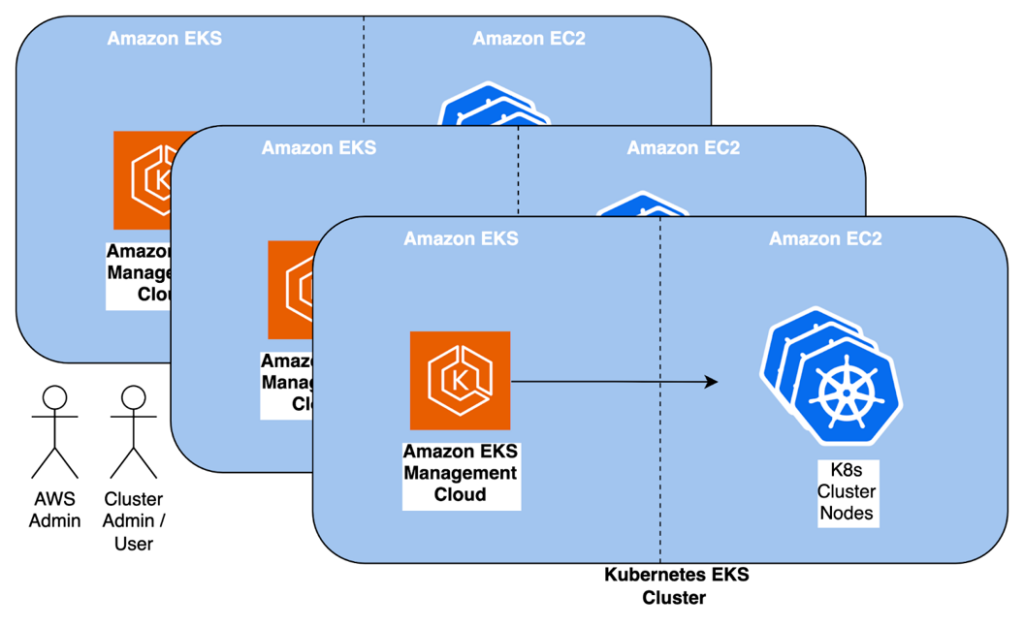

In the following architecture diagram, EKS consists of:

- One or more distinct clusters that are managed by the AWS EKS Management platform;

- Each cluster’s Worker Nodes are provisioned as VMs within AWS EC2; optionally, AWS Fargate can replace them, or be used alongside them, to run Kubernetes Pod workloads directly.


It is not too dissimilar from Rancher’s architecture from a high-level perspective, except that the Management Plane is managed completely by AWS itself, and putting the Fargate option aside, the Worker Nodes are provisioned in a similar manner within the EC2 space.

Reduced Maintenance

Even though Rancher does simplify the Management of Multiple Clusters and the management of each cluster, it still introduces some overhead:

- The Management Cluster: this is a fully-fledged Kubernetes Cluster (i.e. Management Nodes and Worker Nodes) dedicated to running the Rancher Management Plane services;

- The User Clusters: each of these is also a fully-fledged Kubernetes Cluster, with its own Management Nodes and Worker Nodes; and

- The AWS administration overhead to keep the underlying EC2 instances patched and up to date.

EKS, on the other hand, requires only minimal maintenance on the Worker Nodes, since one can choose to deploy either “Managed Nodes” or “Self-Managed Nodes”, with “Managed Nodes” having their OS patching and maintenance handled by AWS.

The combination of a fully “Managed” (i.e. hosted) Kubernetes Management Plane and provisioned “Managed Nodes” is exactly what the Mojaloop Core OSS Team needs to offload its maintenance effort, allowing us to focus on maintaining the Mojaloop Core Services/Applications instead of worrying about the infrastructure underlying our QA/Dev environments.

This has resulted in substantial savings in man-hours alone; in addition, our AWS service bills have been reduced by approximately 60%.

Closer alignment to the Kubernetes Release Cycle

Another important aspect of maintenance is how to keep Kubernetes up to date with its release cadence. This is especially important when considering the Mojaloop Design Authority’s (DA) decision (see my blog “Which Kubernetes Distribution should one use?” for more information) regarding what Kubernetes version and distributions should be used for testing and deploying Mojaloop.

It is worth noting that both Rancher and EKS provide the ability to upgrade the underlying Kubernetes version with minimal effort, but EKS’s support for Kubernetes is more closely aligned with the Kubernetes release cadence. This helps the OSS Core Team ensure that future Mojaloop releases are tested against the most recent “testable” Kubernetes release.

GitOps Approach

Since we had the opportunity to re-look at how we configure and deploy our Core OSS QA/Dev environment, we decided to adopt a GitOps approach. This means that we utilize a Git repo as our single source of truth to store our environment configuration. The additional benefit here is that our environment configuration is Source Control Managed (SCM), allowing us to easily manage and track any changes made to our environment configurations.

Since we are using GitHub, we also have the benefit of using GitHub Actions to execute our CI Workflow Jobs, which apply that configuration to our QA/Dev environment.

As part of this, we also wanted to drive our supporting infrastructure service capabilities via the Kubernetes cluster itself. The following supporting services were configured and deployed for this purpose:

- External DNS – Automatically configure external DNS services on Cloud Providers (e.g. AWS, Google, etc)

- Nginx Ingress Controller – Ingress-NGINX Controller for Kubernetes that supports Ingress routing for both on-prem and several Cloud Providers (e.g. AWS, Google, etc)

- Certificate Manager – Automatically provision and manage TLS certificates in Kubernetes, both external and internal
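To illustrate how these three services can cooperate, here is a minimal sketch (not the team's actual configuration) that builds a single Ingress manifest in Python. It assumes External DNS is configured to watch Ingress resources, that the Ingress-NGINX class is named `nginx`, and that a cert-manager ClusterIssuer already exists. The function name `make_ingress`, the issuer name `letsencrypt`, the host, and the service name are all hypothetical.

```python
import json


def make_ingress(name, host, service, port, issuer):
    """Build a networking.k8s.io/v1 Ingress manifest as a plain dict."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {
            "name": name,
            # cert-manager reads this annotation and requests a certificate
            # from the named ClusterIssuer (the issuer name is an assumption).
            "annotations": {"cert-manager.io/cluster-issuer": issuer},
        },
        "spec": {
            # Route this Ingress through the Ingress-NGINX controller.
            "ingressClassName": "nginx",
            # cert-manager stores the issued certificate in this Secret.
            "tls": [{"hosts": [host], "secretName": f"{name}-tls"}],
            "rules": [{
                # External DNS can create a DNS record for this host.
                "host": host,
                "http": {"paths": [{
                    "path": "/",
                    "pathType": "Prefix",
                    "backend": {
                        "service": {"name": service, "port": {"number": port}}
                    },
                }]},
            }],
        },
    }


if __name__ == "__main__":
    manifest = make_ingress("demo", "demo.example.com", "demo-svc", 80, "letsencrypt")
    # kubectl accepts JSON as well as YAML, so this output can be piped
    # into `kubectl apply -f -`.
    print(json.dumps(manifest, indent=2))
```

With this shape, one resource carries the information each supporting service needs: the host for DNS, the class for routing, and the annotation plus `tls` block for certificates.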

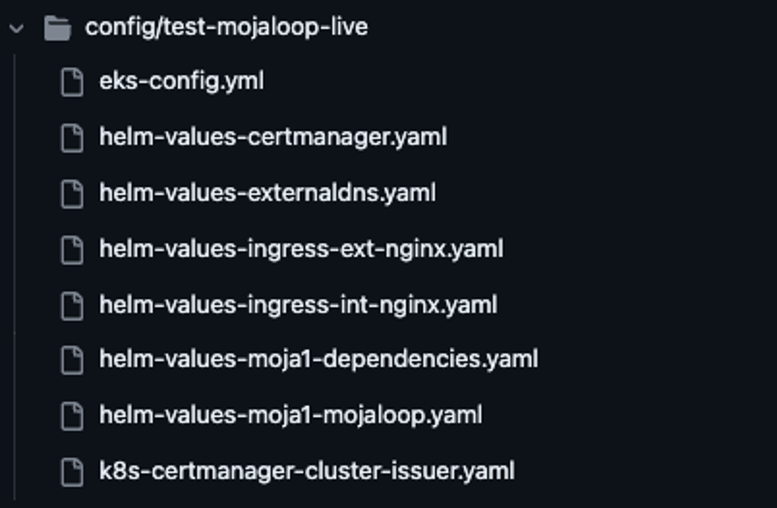

Within our Git repo, the configuration is split by environment under the `/config` folder as follows (only a single environment, `test-mojaloop-live`, is shown here for simplicity):


In the above screenshot, you will see several configurations:

- EKS Cluster configuration itself, where we define the Kubernetes Cluster, NodeGroups, Policies, and IAM mappings;

- Helm configurations for supporting services such as External DNS, etc; and

- Custom Kubernetes Descriptors that need to be applied

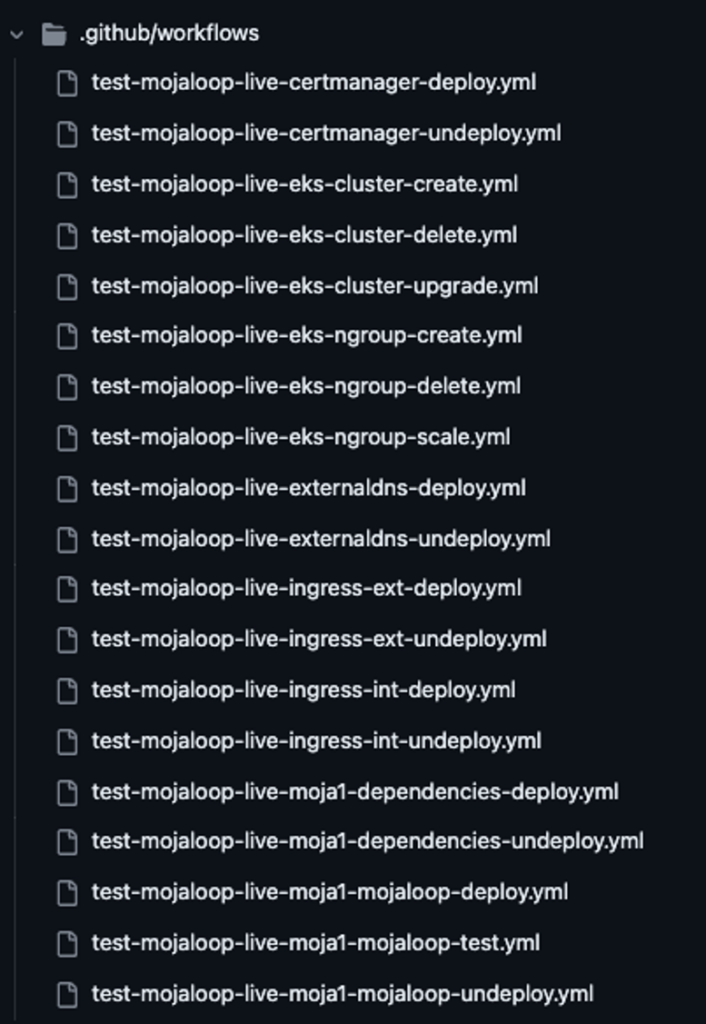

These configurations are then applied by our GitHub Actions CI Workflow Jobs as shown in the next screenshot:


The above CI Workflow Jobs can be used to provision/deploy/create, upgrade, undeploy/delete, or even test our configurations for each environment.
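As a rough sketch of what a first-time "provision/deploy" job might do, the Python below walks one environment folder and builds the ordered list of commands, without executing them. Everything about the layout is an assumption made for illustration: the file name `cluster.yaml`, the `helm/` and `manifests/` subfolders, the use of `eksctl` for cluster creation, and the chart references in `CHARTS` (which presume the Helm repos were added under those aliases). The actual workflow may be organized quite differently.

```python
from __future__ import annotations

from pathlib import Path

# Hypothetical mapping of Helm release name -> chart reference.
CHARTS = {
    "external-dns": "external-dns/external-dns",
    "ingress-nginx": "ingress-nginx/ingress-nginx",
    "cert-manager": "jetstack/cert-manager",
}


def plan_deploy(env_dir: Path) -> list[list[str]]:
    """Return the commands for a first-time deploy, in order, without running them."""
    commands: list[list[str]] = []

    # 1. Cluster first (assumed to be an eksctl ClusterConfig file).
    cluster = env_dir / "cluster.yaml"
    if cluster.is_file():
        commands.append(["eksctl", "create", "cluster", "-f", str(cluster)])

    # 2. Supporting services: one Helm values file per release.
    helm_dir = env_dir / "helm"
    if helm_dir.is_dir():
        for values in sorted(helm_dir.glob("*.yaml")):
            release = values.stem
            if release not in CHARTS:
                continue  # skip values files with no known chart
            commands.append([
                "helm", "upgrade", "--install", release, CHARTS[release],
                "--namespace", release, "--create-namespace",
                "-f", str(values),
            ])

    # 3. Custom Kubernetes descriptors last.
    manifests_dir = env_dir / "manifests"
    if manifests_dir.is_dir():
        for manifest in sorted(manifests_dir.glob("*.yaml")):
            commands.append(["kubectl", "apply", "-f", str(manifest)])

    return commands


if __name__ == "__main__":
    for cmd in plan_deploy(Path("config/test-mojaloop-live")):
        print(" ".join(cmd))
```

Returning a plan instead of running the commands keeps the sketch safe to try locally; a CI job could pass each entry to `subprocess.run(cmd, check=True)` once the plan looks right.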

Lastly, this means that anybody who has access to this Git repo is able to manage the Core OSS QA/Dev environments without needing detailed knowledge of Kubernetes or EKS.

Future Enhancements

We have a few future enhancements on our roadmap that we plan to work on going forward:

- Add supporting Kubernetes Services for monitoring and log aggregation

- Add support for GitOps Operators such as Flux CD or Argo CD

- Develop an EKS Module for Mojaloop’s Modularized IaC Framework that can be used by any IaC deployer who wishes to use AWS’s managed Kubernetes service offerings, thereby giving us (and you) a standard approach to running Mojaloop on-prem or on-cloud.

Conclusion

The Mojaloop Core Team has recently completed migrating to EKS from Rancher using a GitOps approach, which has helped the Core OSS Team realize the following benefits:

- Reduced Maintenance effort and costs.

- A standard approach for standing up the OSS Core QA/Dev environment, ensuring that future Mojaloop releases are more closely aligned with the Mojaloop DA’s guidelines for testing.

- Most importantly, the freedom for the Mojaloop Core OSS team to focus on maintaining the Mojaloop Core Services instead of worrying about the underlying QA/Dev infrastructure.

If you are interested in learning more, please contact us.

References

- https://github.com/mojaloop/project/issues/3354

- https://www.infitx.com/which-kubernetes-distribution-should-one-use

- https://github.com/mojaloop/design-authority-project/issues/93

- https://www.rancher.com/why-rancher

- https://aws.amazon.com/eks/features

- https://github.com/features/actions

- https://about.gitlab.com

- https://en.wikipedia.org/wiki/Version_control

- https://kubernetes.io/releases/release

- https://fluxcd.io

- https://argoproj.github.io/cd